Volleyball Analytics for Real-Time Decisions

- #AI

- #Audio/video analytics

- #Computer vision

- #Data science

- #Image classification

- #Machine learning

About the Client

The client is the head coach of the United States Air Force volleyball team. He wanted to bring AI into his match analytics so that he could adjust game strategy on the fly.

Business Challenge

Recognizing human activities in video streams remains challenging for researchers because of background clutter and changes in scale, viewpoint, lighting, and appearance. It also requires a lot of processing time, which drives up costs.

Our client's goal was to detect the actions of volleyball players in near real time using a single iPhone 12. He wanted serve classification to be quick and cost-effective.

The broader aim was automated match processing for coaches on an iPhone 12.

Solution Overview

The goal of the project was to build an ML model that classifies volleyball serves in video, with a target F1-score of 0.85.
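
For readers unfamiliar with the metric, the F1-score is the harmonic mean of precision and recall. The sketch below shows the calculation; the counts are illustrative placeholders, not results from this project.

```python
def f1_score(true_positives: int, false_positives: int, false_negatives: int) -> float:
    """Return the F1-score: the harmonic mean of precision and recall."""
    predicted_positive = true_positives + false_positives
    actual_positive = true_positives + false_negatives
    if predicted_positive == 0 or actual_positive == 0:
        return 0.0  # no predictions or no real positives: score is defined as 0 here
    precision = true_positives / predicted_positive
    recall = true_positives / actual_positive
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Illustrative counts only (hypothetical, not project data).
score = f1_score(true_positives=85, false_positives=15, false_negatives=15)
print(f"F1 = {score:.2f}")  # prints "F1 = 0.85"
```

Unlike plain accuracy, F1 stays informative when serves are rare compared with non-serve frames.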

The client's main requirements were that the whole system run in near real time and that the model be integrated into an iOS app running on the iPhone 12.

By the end of the project, our team had a serve-detection model for volleyball games that runs at real-time speed (26 FPS) with an F1-score of 0.87.

Overall, the project took six weeks, covering dataset preparation, model training, and integration on the iPhone 12.
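
To put the 26 FPS figure in perspective, the small sketch below converts a throughput into a per-frame time budget. The helper names are hypothetical and exist only for illustration.

```python
def per_frame_budget_ms(fps: float) -> float:
    """Time available to process one frame at the given throughput, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def measured_fps(frames_processed: int, elapsed_seconds: float) -> float:
    """Throughput observed over a timed run."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return frames_processed / elapsed_seconds


budget = per_frame_budget_ms(26)
print(f"{budget:.1f} ms per frame")  # prints "38.5 ms per frame"
```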

Project Description

Action Classifier

Our first assumption was that the Action Classifier from Create ML would solve our problem. During the experiments, we found that the Action Classifier works well only when a single person is on screen. Our footage shows several players, some of them occasionally hidden, and we could not find a way to do multi-person action classification with MLActionClassifier. We concluded that this method was not suitable for our needs.

Image Classifier

Our second approach was to use another tool from Create ML, the Image Classifier. Our dataset contained serves in which the server was visible and serves in which the server was not, so we decided to try event classification instead of action classification. In other words, we planned to classify images by the positions of all players, since the players' positions in a frame can indicate whether a serve is taking place.

The workflow was as follows:

- Organize the training dataset directory by placing each class of images in its own subdirectory.

- Organize the test dataset directory in the same way.

- Create an Image Classification project.

- Configure the training set, set up augmentations, and set the number of epochs.

- Train the Image Classifier on the extracted image features, checking its accuracy iteratively.

- Save the model and use it in the Xcode project.
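
The first two steps can be sketched as a small script. Create ML's image classifier reads the class label from the name of the folder that holds each image, so the script copies every image into a per-class subdirectory. The function name, the file names, and the class names `serve` and `no_serve` are assumptions made for illustration; the source does not state how the data was labeled.

```python
import shutil
from pathlib import Path


def organize_by_class(labels: dict[str, str], source_dir: Path, target_dir: Path) -> dict[str, int]:
    """Copy each image into target_dir/<class name>/ and return per-class counts.

    `labels` maps an image file name to its class name (hypothetical input format).
    """
    counts: dict[str, int] = {}
    for file_name, class_name in labels.items():
        class_dir = target_dir / class_name
        class_dir.mkdir(parents=True, exist_ok=True)  # one folder per class
        shutil.copy2(source_dir / file_name, class_dir / file_name)
        counts[class_name] = counts.get(class_name, 0) + 1
    return counts
```

For example, `organize_by_class({"0001.jpg": "serve", "0002.jpg": "no_serve"}, Path("frames"), Path("dataset/train"))` would produce `dataset/train/serve/0001.jpg` and `dataset/train/no_serve/0002.jpg`. Running it a second time with a different target directory gives the test set the same layout.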

However, we also ran into issues at this step:

- The image classifier was not accurate enough: we obtained about 70% accuracy on serve detection.

- Because the client wanted the whole system built on native macOS tools, training was slow: more than 10 hours on a MacBook for a dataset of about 15,000 samples. For comparison, the same training procedure on a GPU takes about 20 to 30 minutes.

In addition, the camera was placed behind the home team, so the serving player was sometimes outside the camera's field of view.

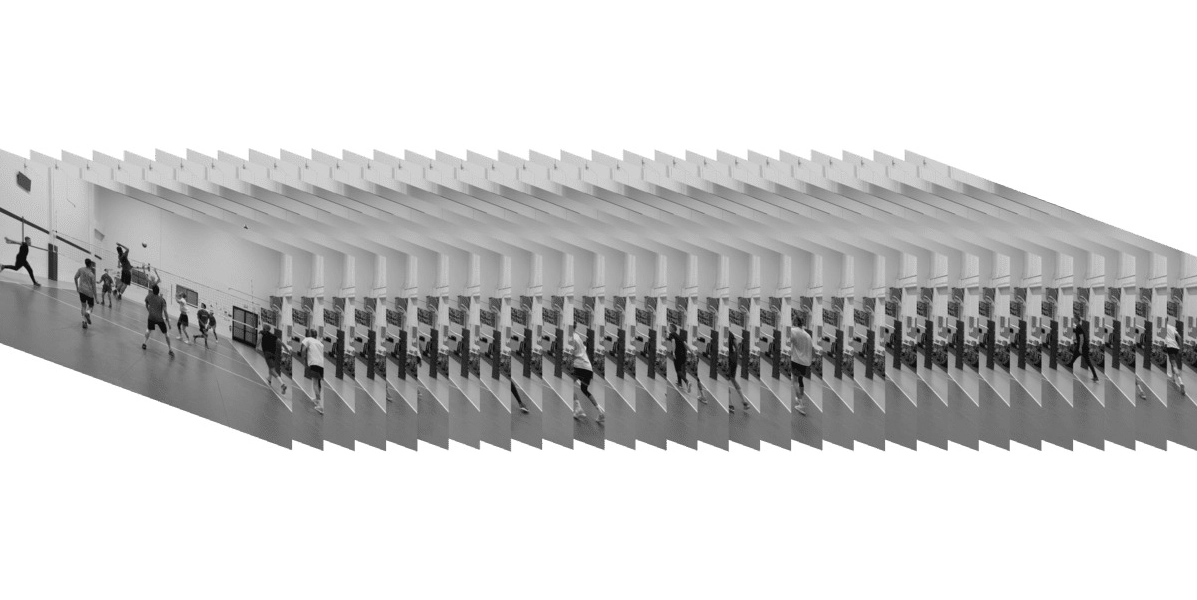

How did we solve these problems? We kept the classification formulation, but this time prepared a dataset in which each sample is a sequence of 30 consecutive grayscale frames. The output is, as before, a class label. After training the model with the Keras framework, we converted it to the .mlmodel format. The final F1-score was about 0.87.
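
A minimal sketch of how such samples could be assembled from a stream of frames is shown below. The window size of 30 comes from the description above. The stride, the luma weights, and the function names are assumptions, since the source does not say how frames were converted or how much consecutive samples overlapped.

```python
from collections import deque
from typing import Iterable, Iterator, List, Sequence, Tuple

Pixel = Tuple[int, int, int]
GrayFrame = List[List[float]]

WINDOW_SIZE = 30  # frames per sample, as described in the text


def to_grayscale(frame: Sequence[Sequence[Pixel]]) -> GrayFrame:
    """Convert an RGB frame (rows of (r, g, b) tuples) to luma values."""
    # ITU-R BT.601 weights; an assumption, the project's conversion is not stated.
    return [[0.299 * r + 0.587 * g + 0.114 * b for r, g, b in row] for row in frame]


def sliding_windows(
    frames: Iterable[GrayFrame], size: int = WINDOW_SIZE, stride: int = 1
) -> Iterator[List[GrayFrame]]:
    """Yield lists of `size` consecutive frames, advancing `stride` frames each time."""
    if size <= 0 or stride <= 0:
        raise ValueError("size and stride must be positive")
    window: deque = deque(maxlen=size)  # oldest frame drops out automatically
    for index, frame in enumerate(frames):
        window.append(frame)
        first_index = index - size + 1  # index of the oldest frame in the window
        if first_index >= 0 and first_index % stride == 0:
            yield list(window)
```

Each yielded window is one sample for the classifier. With a stride of 1, a clip of 35 frames produces 6 overlapping samples; a larger stride trades temporal resolution for fewer samples.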

Technological Details

We used TensorFlow to build the model and the coremltools Python library to convert the TensorFlow model into a Core ML compatible format. To integrate the model on iOS, we used the Core ML framework and the Xcode IDE.