2026 Satellite Imagery Landscape: Selecting the Right Imagery Specs and Provider for GeoAI

Anastasiia Dolgaryeva

Delivery Manager

Most procurement decisions for satellite imagery still revolve around the highest resolution available. For years, that logic made sense. When 30cm spaceborne imagery was the limit, more detail almost always meant better analytical outcomes.

But in 2026, with 10cm native imagery now commercially accessible, the narrative has changed. Teams building GeoAI and machine learning projects should stop chasing the clearest picture and start pursuing the lowest cost-per-insight. Beyond inflating the cost of storage and labeling pipelines, ultra-fine spatial resolution can introduce noise that complicates model convergence. More on this later.

This guide continues the discussion we started in our 2021 analysis of satellite imagery resolution for different use cases and extends it to AI training workflows. Here, we’ll cover how to select the right GSD for ML pipelines, compare leading satellite imagery providers, and outline the legal constraints that apply to high-resolution remote sensing data.

Two Metrics That Define Satellite Data Usability: GSD and Accuracy

When satellite data vendors advertise “30cm resolution,” they’re describing Ground Sampling Distance (GSD) – the distance on the ground between the centers of adjacent pixels. GSD is often conflated with image sharpness or quality, but it only describes pixel footprint: a 30cm GSD means one pixel covers roughly 30 x 30cm on the ground. A lower number indicates greater spatial detail and vice versa.
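To make the GSD arithmetic concrete, here is a minimal Python sketch. The function names and the 4.5 m car length are illustrative assumptions, not values from any provider. It shows how many pixels span a target at a given GSD and how pixel count per square kilometre grows as GSD shrinks.

```python
def pixels_across(object_size_m: float, gsd_m: float) -> float:
    """Number of pixels spanning an object along one axis at this GSD."""
    return object_size_m / gsd_m


def pixels_per_km2(gsd_m: float) -> float:
    """Number of pixels needed to cover one square kilometre at this GSD."""
    pixels_per_km_side = 1000.0 / gsd_m
    return pixels_per_km_side ** 2


if __name__ == "__main__":
    car_length_m = 4.5  # hypothetical target size, for illustration only
    for gsd_m in (0.3, 3.0, 10.0):
        print(
            f"GSD {gsd_m:>4} m: car spans {pixels_across(car_length_m, gsd_m):.2f} px, "
            f"{pixels_per_km2(gsd_m):,.0f} px per km^2"
        )
```

Because pixel count scales with the inverse square of GSD, a tenfold improvement in resolution means roughly a hundredfold increase in pixels to store, label, and process for the same area.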

Satellite imagery resolution comes in two formats.

- Native data is the original resolution captured by the satellite sensor. It preserves raw spectral fidelity vital for vegetation or mineral indices and is preferred for AI models requiring spectral stability and precise geometry. It’s also the standard for 3D modeling and engineering tasks that depend on accurate pointing.

- HD (high-definition) data uses mathematical models to increase the pixel density beyond what the sensor captured. For example, native 15cm imagery can be enhanced to 5cm HD, helping AI algorithms detect critical infrastructure defects like micro-fissures in bridge spans.

Yet one small nuance has a big impact on model performance – geographic accuracy. A pixel-perfect image that’s positioned 3 meters from its true location can disrupt any AI-ready data pipeline. In other words, even a minor georegistration error between two acquisitions of the same area can make a model show construction that doesn’t exist or miss deforestation that does.
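The effect of the 3-meter offset mentioned above depends on GSD and target size. The sketch below is a simplified illustration with hypothetical helper names: it assumes a shift along a single axis and a square target, and converts the offset into pixels and into the share of the target footprint that still overlaps between two acquisitions.

```python
def offset_in_pixels(offset_m: float, gsd_m: float) -> float:
    """Express a georegistration offset in pixel units."""
    return offset_m / gsd_m


def overlap_fraction(offset_m: float, object_size_m: float) -> float:
    """Share of a square object's footprint that still overlaps itself
    after a shift along one axis (simplifying assumption)."""
    return max(0.0, 1.0 - offset_m / object_size_m)


if __name__ == "__main__":
    offset_m = 3.0  # the 3 m positional error used as the example in the text
    for gsd_m in (0.3, 3.0, 10.0):
        print(f"GSD {gsd_m:>4} m: offset = {offset_in_pixels(offset_m, gsd_m):.2f} px")
    # Hypothetical 10 m wide building observed in two misaligned acquisitions
    print(f"Footprint overlap: {overlap_fraction(offset_m, 10.0):.0%}")
```

The same physical error is a sub-pixel shift at 10m GSD but a ten-pixel shift at 30cm, which is one reason very high-resolution change detection is more sensitive to geometric accuracy.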

Mapping the 2026 Optical Resolution Tiers in the Satellite Market

You must align your model’s requirements with the appropriate resolution to ensure performance and scalability. Here are the four tiers, and each one draws a hard line around what your model can and cannot detect.

However, optical imagery has one critical limitation: it depends on clear skies and daylight.

The Weather-Proof Alternative: SAR

Optical resolution is useless if your area of interest spends months under cloud cover. For projects in the tropics, high latitudes, or regions with persistent atmospheric interference like smoke or haze, SAR imagery is often the only dependable option.

SAR (Synthetic Aperture Radar) is an active remote sensing technology that doesn’t rely on reflected sunlight to capture images as optical sensors do. The satellite emits microwave pulses and measures the return signal, so neither atmospheric conditions nor time of day is an obstacle. The sensor powering Sentinel-1 operates on exactly this principle, delivering reliable earth observation data.

For years, SAR had one major weakness. The raw output appears grainy and difficult to interpret without specialist training. Just recently, that’s changed. SAR-to-Optical Image Translation uses deep learning to reconstruct more intuitive visuals, enabling analysts and ML pipelines to work with temporally continuous, weather-independent data streams.

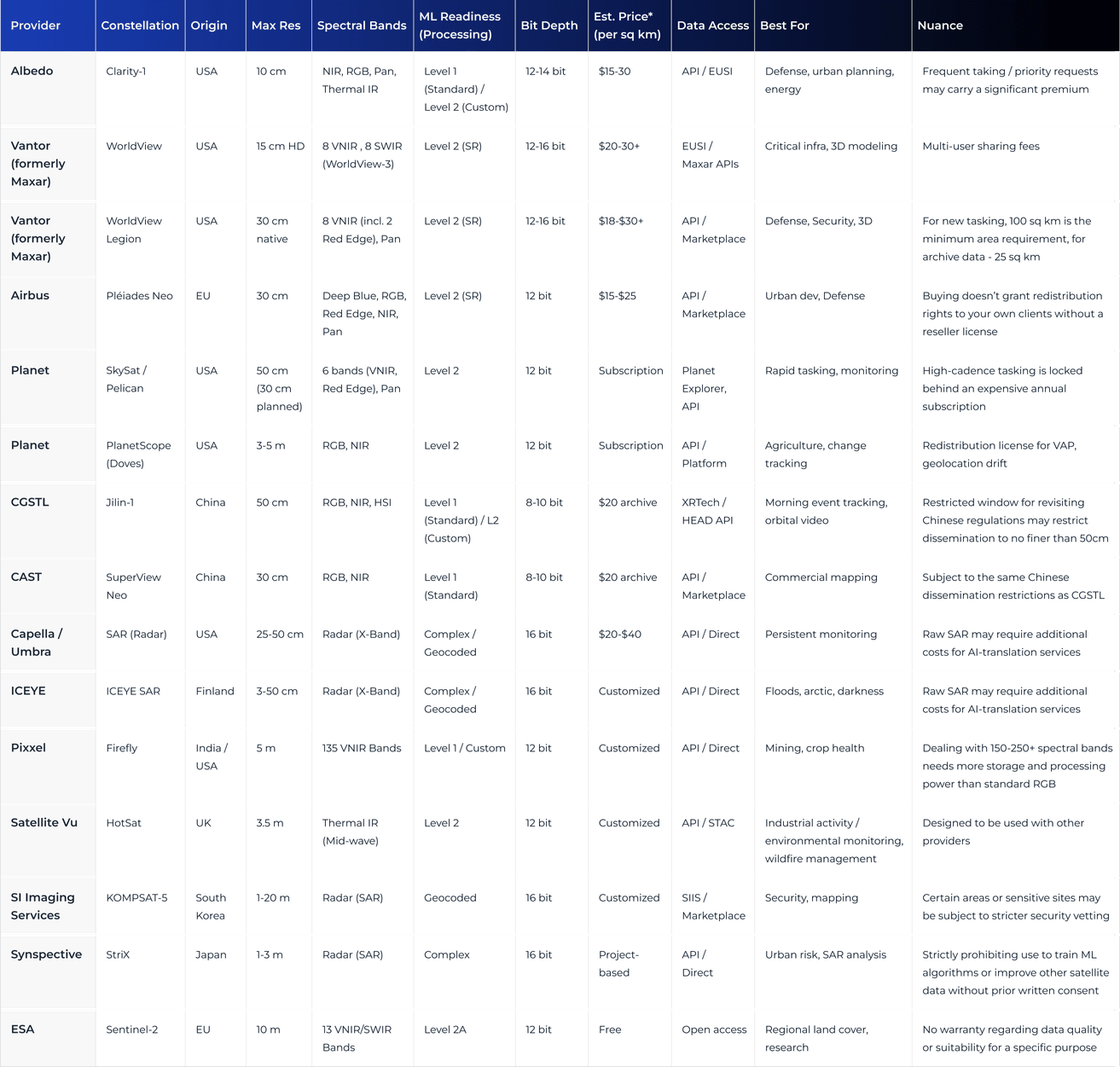

The 2026 Global Satellite Image Provider Comparison

The AI-Readiness Framework: How to Choose Satellite Data for ML

In GeoAI analytics, a sharp image and a useful image are not the same thing. Satellite data is AI-ready when models extract the same signal over time, across sensors and geographies. That requires Analysis-Ready Data (ARD): imagery that is radiometrically corrected, geometrically aligned, and delivered via standardized backends.

To build scalable AI models, choose resolution purposefully based on target size and industry requirements. Use object-dominant models when detecting assets like vehicles or houses, and feature-dominant models when analyzing regional trends, where mixed pixels provide more stable, less noisy signatures.

For instance, in our Clearcut deforestation platform, we selected Sentinel-2 because its 10m resolution stabilizes spectral signatures, outperforming higher-res sensors for land-cover classification. Similarly, our precision agriculture platform leverages spectral indices to monitor crop health at a regional level.

But GSD is only one piece of the puzzle. True Earth observation AI readiness requires two more:

- Temporal revisit determines how well models capture transient events or phenological changes. Daily 3m imagery is far more valuable for tracking illegal mining operations than monthly 30cm imagery.

- Spectral resolution uses diagnostic bands like red-edge and SWIR to identify mineral compositions or soil moisture that RGB cannot detect. Hyperspectral satellite data, such as Pixxel’s Firefly constellation, takes this further, capturing hundreds of narrow spectral bands that reveal material fingerprints.
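Most spectral indices built on bands like NIR, red-edge, and SWIR share one form: a normalized difference of two reflectance values. The sketch below shows that form in plain Python. The reflectance numbers are made up for illustration, and returning 0.0 for a zero-signal pixel is an assumption of this example rather than a standard. For Sentinel-2, NDVI is typically computed from band B8 (NIR) and band B4 (red).

```python
def normalized_difference(band_a: float, band_b: float) -> float:
    """Generic normalized difference index: (a - b) / (a + b)."""
    total = band_a + band_b
    if total == 0:
        return 0.0  # assumption: treat no-signal pixels as neutral
    return (band_a - band_b) / total


def ndvi(nir: float, red: float) -> float:
    """Vegetation index: (NIR - Red) / (NIR + Red)."""
    return normalized_difference(nir, red)


def ndmi(nir: float, swir: float) -> float:
    """Moisture index: (NIR - SWIR) / (NIR + SWIR)."""
    return normalized_difference(nir, swir)


if __name__ == "__main__":
    # Hypothetical surface reflectance values, for illustration only
    samples = {
        "dense canopy": {"nir": 0.45, "red": 0.05, "swir": 0.15},
        "bare soil": {"nir": 0.25, "red": 0.20, "swir": 0.30},
    }
    for label, px in samples.items():
        print(
            f"{label}: NDVI={ndvi(px['nir'], px['red']):.2f}, "
            f"NDMI={ndmi(px['nir'], px['swir']):.2f}"
        )
```

In a real pipeline the same expression would run over whole raster arrays rather than single values, but the per-pixel logic is unchanged.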

Altogether, the best strategy is to find the minimum viable pixel: the coarsest resolution that still meets your F1-score requirements. The F1 score is the mathematical balance between capturing all targets and avoiding false positives. Overspecifying resolution rarely improves model performance, but it does increase compute costs, preprocessing overhead, and sensitivity to geometric errors that moderate-resolution imagery tolerates more robustly.

F1 = 2 × (precision × recall) / (precision + recall)

In this formula, precision is the share of detected objects that are correct, and recall is the share of actual objects that the model finds. The closer the F1 score is to 100%, the better the model balances accuracy with thoroughness.
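The F1 score is the harmonic mean of precision and recall, and it can be computed directly from detection counts. Here is a minimal sketch; the function name and the counts in the example are hypothetical.

```python
def precision_recall_f1(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    """Compute precision, recall, and F1 from detection counts.

    tp: true positives (correct detections)
    fp: false positives (detections with no matching object)
    fn: false negatives (objects the model missed)
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


if __name__ == "__main__":
    # Hypothetical evaluation: 80 correct detections, 20 false alarms, 40 misses
    p, r, f1 = precision_recall_f1(tp=80, fp=20, fn=40)
    print(f"precision={p:.2%} recall={r:.2%} F1={f1:.2%}")
```

Running the same evaluation on imagery at several GSDs, and picking the coarsest one that clears the target F1, is one way to put the minimum viable pixel idea into practice.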

The Compliance Side of Satellite Imagery

Accessing high-resolution satellite imagery for a specific Area of Interest requires buyers to clear a layered set of regulatory hurdles. These hurdles vary with the location, sensor type, and operator jurisdiction.

U.S. providers operate under NGA and ITAR frameworks, while EU-based companies must comply with GDPR. Both sets of regulations restrict high-res data resale for sensitive areas, governing who can receive imagery and under what conditions, particularly regarding national security and privacy.

The NOAA tiered system further classifies providers by technological uniqueness, with higher-tier systems subject to potential shutter control. In some cases, companies must undergo thorough personnel vetting to access restricted high-res data.

Geopolitics has its complications. Chinese providers may offer 30cm imagery at half the cost of Western equivalents, but for any U.S. federal, EU-funded, or critical national infrastructure projects, that data is off the table. Legal prohibitions against using non-NATO data in these contexts are enforceable and frequently discovered too late in the procurement process.

Licensing also adds barriers to entry. Buying imagery doesn’t automatically grant rights to share, embed, or use it for large-scale ML training. Most providers default to single-user licenses, while broader usage rights come with a premium price tag.

Teams building geospatial solutions should align their procurement strategy with their end-client’s security protocols. In 2026, data origin is as critical as its spectral accuracy.

The New Data Delivery Standard for 2026

Historically, acquiring satellite imagery was a tedious and time-consuming process, involving manual sourcing and file downloading.

Today, thanks to the adoption of the STAC (SpatioTemporal Asset Catalog) specification and regulatory modernization, ML projects can leverage API-first tasking to access near-real-time Earth observations and integrate them into automated pipelines.
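As an illustration of what API-first access looks like, the sketch below builds the JSON body for a STAC API Item Search request. The `collections`, `bbox`, `datetime`, and `limit` fields come from the STAC API specification. The helper name, the collection ID, and the bounding box are assumptions for this example, and collection IDs differ from one catalog to another.

```python
import json


def build_stac_search(collections, bbox, start, end, limit=10):
    """Build a request body for a STAC API Item Search (POST /search).

    bbox is [west, south, east, north] in WGS84 degrees.
    start and end are RFC 3339 timestamps forming a closed interval.
    """
    if len(bbox) != 4:
        raise ValueError("bbox must have four values: west, south, east, north")
    west, south, east, north = bbox
    if south > north:
        raise ValueError("south latitude must not exceed north latitude")
    return {
        "collections": list(collections),
        "bbox": [west, south, east, north],
        "datetime": f"{start}/{end}",
        "limit": limit,
    }


if __name__ == "__main__":
    body = build_stac_search(
        collections=["sentinel-2-l2a"],   # assumed collection ID, catalog-specific
        bbox=[30.2, 50.3, 30.8, 50.6],    # hypothetical area of interest
        start="2026-01-01T00:00:00Z",
        end="2026-01-31T23:59:59Z",
    )
    # This payload would be POSTed to the catalog's /search endpoint.
    print(json.dumps(body, indent=2))
```

Because every STAC-compliant catalog accepts the same search fields, a pipeline written this way can switch providers by changing the endpoint and collection ID rather than the integration code.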

Another development, the Cloud Optimized GeoTIFF (COG), allows users to stream only the specific portion of a file they need through HTTP range requests. For high-cadence use cases like field boundary detection, a cloud-native approach is the ultimate cost-optimizer and automation enabler.
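The saving from partial reads comes from internal tiling: a reader fetches only the tiles that intersect the requested window. The sketch below is a simplified model of that bookkeeping, not a real reader. The 512-pixel tile size, the image dimensions, and the window are assumptions, and production libraries such as GDAL handle tiling and overviews automatically.

```python
import math


def tiles_for_window(col_off, row_off, width, height, tile_size=512):
    """Return (row, col) indices of internal tiles that intersect a pixel window."""
    first_col = col_off // tile_size
    last_col = (col_off + width - 1) // tile_size
    first_row = row_off // tile_size
    last_row = (row_off + height - 1) // tile_size
    return [
        (row, col)
        for row in range(first_row, last_row + 1)
        for col in range(first_col, last_col + 1)
    ]


def total_tiles(image_width, image_height, tile_size=512):
    """Total number of internal tiles in a full-resolution image."""
    return math.ceil(image_width / tile_size) * math.ceil(image_height / tile_size)


if __name__ == "__main__":
    # Hypothetical 10980 x 10980 px scene and a 1024 x 1024 px area of interest
    needed = tiles_for_window(col_off=2000, row_off=3000, width=1024, height=1024)
    total = total_tiles(10980, 10980)
    print(f"Tiles to fetch: {len(needed)} of {total} ({len(needed) / total:.1%})")
```

In this model, the window touches only a small fraction of the file's tiles, which is why windowed access cuts both transfer volume and latency for small areas of interest.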

Final Takeaways

The through-line of this guide is: resolution is a tool, and its value depends on whether it matches the job. Using ultra-high-res imagery for regional modeling is a strategic mistake: you pay top dollar for geometric sensitivity and processing friction, and end up with worse performance than a moderate-resolution dataset could deliver.

Our experience across precision farming, crop stress modeling, and large-scale deforestation tracking helps us pinpoint where expensive imagery earns its cost, and where it doesn’t. We start with the ML task, define the minimum GSD, and design a lean pipeline around it. If satellite data isn’t the right fit, we’ll tell you that too. Contact us to find the answers to your geospatial strategy questions.